Social XR, or extended reality in a social setting, allows physical and virtual worlds to blend, making distances between people almost disappear. Imagine a family member on the other side of the world, someone you now talk to over a video call. As the technology develops, that person could one day appear to be sitting next to you at the kitchen table in a virtual 3D form, or you could walk through a virtual museum together.

That scenario is not quite within reach yet. But for many years, it has been possible to step into the body of an avatar and enter an online world where you can meet like-minded people. During the Spring School on Social XR, four researchers gave public lectures on both the promise and the risks of these developments.

Inside the content

In her talk, Professor Yvette Wohn explored safety and trust in embodied online spaces. Wohn leads the Social Interaction Lab at the New Jersey Institute of Technology and studies human-computer interaction from both psychological and sociological perspectives.

“Some people talk about the virtual world and the ‘real’ world. But what happens in virtual reality is also real,” she says. “It is not the same as reading a book or watching a film. In those cases, you separate reality from content. In XR, you are inside the content. Because of that embodied aspect, many of the issues you encounter outside the virtual world are almost the same inside it.”

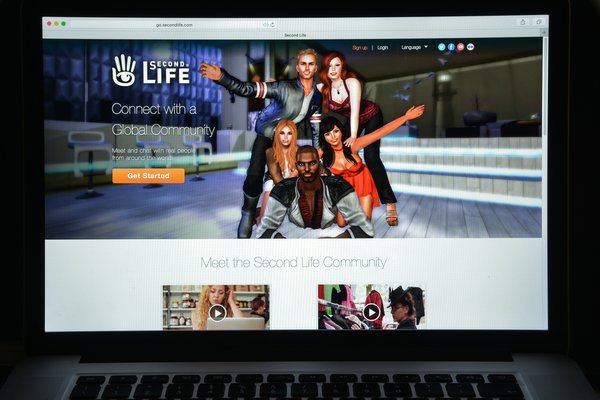

Wohn’s own introduction to VR began in the early 2000s with Second Life, the 3D virtual world where users could socialize, build and trade through an avatar. “As with almost any technology, you can do good and creative things with it, but also bad things,” says Wohn, who has a home there herself. She and the creators of Second Life soon found that rules were needed. Next to her virtual home, someone built a tower covered in advertisements for escort and sexual services.