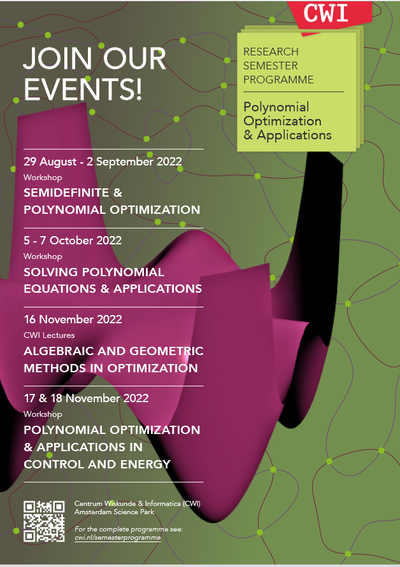

What is polynomial optimization?

Polynomial optimization is an exciting new field, which emerged in the last two decades. Building up on cross fertilization between mathematics, theoretical computer science and engineering, it has seen recently a spectacular development, with a growing number of potential application areas and a growing community of researchers, largely due to its wide applicability and versatility. The aim of this research program is to highlight some of these recent developments.

Polynomial optimization deals with optimization problems involving polynomial objective and constraints. These are computationally hard problems, in general nonlinear and nonconvex, ubiquitous in diverse fields and application areas such as discrete optimization, operations research, discrete geometry, theoretical computer science, control theory, and quantum information. Polynomial optimization offers a versatile modeling framework, able to capture decision variables that can be scalar integer- or continuous-valued (useful for applications in discrete and continuous optimization), as well as matrix- or operator-valued (useful for applications in quantum information), or measure-valued (useful for applications in control).

Dedicated solution methods

The key idea is to exploit algebraic and geometric properties of polynomials and develop dedicated solution methods for polynomial optimization problems. These methods are based, on the one hand, on real algebraic results about positive polynomials, and, on the other hand, on functional analytic results about moments of positive measures.

They are rooted in early work by Hilbert (about sums of squares) and by Stieltjes, Hausdorff and Hamburger (about the moment problem) at the turn of the 20th century. Of particular relevance is Hilbert 17-th problem, in his famous list of 23 mathematical problems posed in 1900, which asks whether every nonnegative polynomial can be written as a sum of squares of rational functions, answered affimatively by Artin in 1927.

These techniques permit to design hierarchies of convex relaxations, known as sums of squares hierarchies and Lasserre moment hierarchies, that deliver efficient approximations converging asymptotically to the optimum solution of the original optimization problem. The computational paradigm underlying these relaxations is semidefinite optimization, a far reaching generalization of linear optimization, which boils down to linear optimization over the cone of positive semidefinite matrices and admits efficient algorithms. Semidefinite optimization indeed permits to model sums of squares of polynomials (used as a proxy to certify polynomial positivity) as well as positivity conditions for moments of measures. These key principles have led in the recent years to novel algorithmic methods permitting to attack a large spectrum of hard problems within various application areas, as will be covered by the events in this research program.