With MediaScape XR, visitors can walk freely through a virtual 3D version of the Sound and Vision museum and, in particular, interact with a 3D recreation of a single cultural artefact: a costume worn by Jerney Kaagman, frontwoman of the Dutch rock band Earth and Fire, when the band performed their song “Weekend” on the television program TopPop in 1979. The experience is divided into two main parts: touring the museum and reliving history. In the virtual museum, visitors can observe the 3D costume from different perspectives and at different scales, and read anecdotes about it. They then “time travel” into the costume’s history: the 1979 TopPop show. Immersed in this historical setting, visitors can try on the shiny blue suit and re-enact the musical performance in their own style.

Research and design process

The MediaScape XR experience was designed following a user-centred approach, in collaboration with curators from Sound and Vision and with museum enthusiasts. We held focus group sessions and co-creation workshops with them to understand the stakeholders’ motivations, gather design requirements and collect new ideas.

Based on interviews with the curators, we decided to focus on one specific artefact: the costume. Because this artefact is firmly connected to the country’s cultural memory, it is widely recognised and offers rich material for storytelling. Moreover, it allows for more immersive and playful experiences in VR, such as trying on the suit. From the research results we derived a list of design requirements and defined the interactions and the user experience flow. Based on these findings, the team created digital 3D reproductions of the artefact, the museum, the TopPop music hall and other exhibit elements. We also decided what guidance and educational content to share with visitors, to make sure they understand the story behind the artefact, the technology and the context of the experience.

Technical infrastructure and setup for VRDays

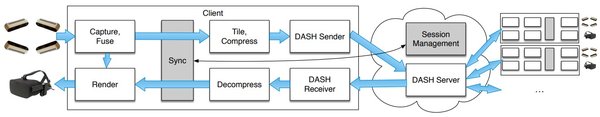

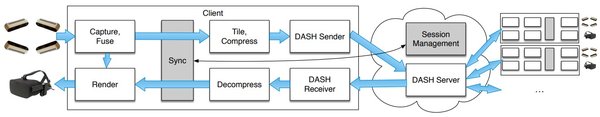

MediaScape XR is a social VR application built on top of the VRTogether platform, which connects multiple users in the same virtual space. The application is developed in the Unity3D engine. To capture users in real time, we use the cwipc framework, a set of libraries and tools that provide a full pipeline for volumetric communication between multiple clients: it captures, encodes, transmits, decodes and renders point clouds in real time.
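To make the order of those pipeline stages concrete, here is a toy sketch in Python. It is not cwipc's API and not how cwipc encodes data: every name here (`capture`, `encode`, `decode`, `render`, `run_frame`, `RECORD`, `SCALE`) is hypothetical, "encoding" is simple millimetre quantisation plus byte packing rather than a real point-cloud codec, and an in-memory queue stands in for the network link between two clients.

```python
import struct
from dataclasses import dataclass
from queue import Queue


@dataclass
class Point:
    """One coloured point: position in metres, colour as 8-bit RGB."""
    x: float
    y: float
    z: float
    r: int
    g: int
    b: int


# Hypothetical wire format: three int32 coordinates (millimetres) + three uint8 colour bytes.
RECORD = struct.Struct("<iiiBBB")
SCALE = 1000  # metres -> millimetres (assumed quantisation step, not cwipc's)


def capture(sensor_frames: list[list[Point]]) -> list[Point]:
    """Stand-in for capture: merge the partial clouds seen by each sensor."""
    merged: list[Point] = []
    for frame in sensor_frames:
        merged.extend(frame)
    return merged


def encode(points: list[Point]) -> bytes:
    """Quantise coordinates and pack the cloud into a byte string (count header + records)."""
    out = bytearray(struct.pack("<I", len(points)))
    for p in points:
        out += RECORD.pack(round(p.x * SCALE), round(p.y * SCALE), round(p.z * SCALE),
                           p.r, p.g, p.b)
    return bytes(out)


def decode(data: bytes) -> list[Point]:
    """Inverse of encode: unpack the records and undo the quantisation."""
    (count,) = struct.unpack_from("<I", data, 0)
    points = []
    offset = 4  # skip the 4-byte point-count header
    for _ in range(count):
        x, y, z, r, g, b = RECORD.unpack_from(data, offset)
        offset += RECORD.size
        points.append(Point(x / SCALE, y / SCALE, z / SCALE, r, g, b))
    return points


def render(points: list[Point]) -> tuple[int, float]:
    """Stand-in for rendering: report the point count and the cloud's height span."""
    if not points:
        return 0, 0.0
    ys = [p.y for p in points]
    return len(points), max(ys) - min(ys)


def run_frame(sensor_frames: list[list[Point]], channel: Queue) -> tuple[int, float]:
    """Push one frame through capture -> encode -> transmit -> decode -> render."""
    channel.put(encode(capture(sensor_frames)))  # sender side
    return render(decode(channel.get()))         # receiver side


if __name__ == "__main__":
    # Three hypothetical sensors, each contributing one point of the same user.
    frames = [
        [Point(0.0, 0.0, 1.0, 10, 20, 200)],
        [Point(0.1, 1.75, 1.0, 10, 20, 200)],
        [Point(-0.1, 0.9, 1.2, 255, 255, 255)],
    ]
    print(run_frame(frames, Queue()))  # prints (3, 1.75)
```

Splitting the work into independent stages mirrors the idea that sender and receiver only share the encoded bytes; in a real deployment the queue would be replaced by a network transport and the packing step by a proper compression codec.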

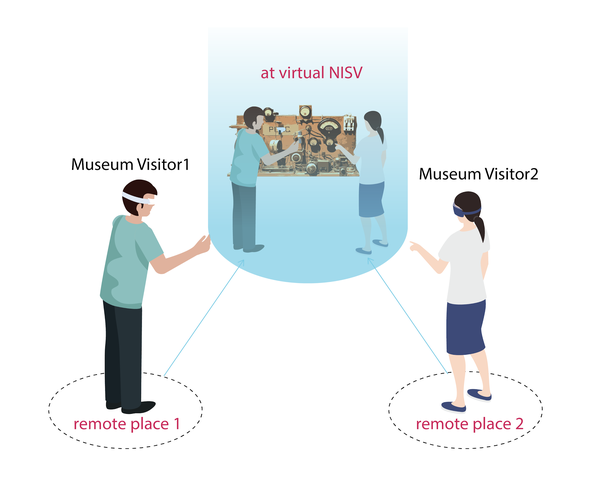

The setup we used to showcase the system at VRDays consisted of two users. Each user was captured by three 3D sensors and wore an HTC VIVE Pro headset, using its controllers to navigate and interact in the virtual space. Although in the demo at OBA the two users were placed side by side, the communication does not rely on a local network, so the setup also works with users in two remote locations (for example, one user in Paris and the other in London).

Vision

Compared with traditional ways of accessing museum archives, such as websites, MediaScape XR offers a novel experience that immerses visitors in the environment and lets them observe and interact with the artefact up close. MediaScape XR combines gamification, education and socialisation. Its ultimate goal is not only to digitise museum artefacts for virtual 3D worlds, but also to make them accessible to a wider, geographically dispersed audience, letting visitors interact with each other in real time beyond the 2D screen. We hope that technologies like these can encourage people to visit museums together more often, regardless of where they are.